5-Minute Primer to Technical SEO

Published on 28 Apr 2022

If a page’s contents are the actors on stage, technical SEO is the crew behind the curtains. Just as lighting and sound technicians give actors their time in the spotlight, technical SEO allows on-page content to be accessed and seen.

This guide covers the basics of technical SEO. As one of the leading SEO companies in Bangkok, we will introduce you to technical SEO, the role it plays behind the scenes of your site, and some of the things to look out for when getting your pages up and running.

What is technical SEO?

Technical SEO refers to the optimisation of the technical aspects of a website to raise its ranking on the search engine results page.

This involves making the site easy for users to access and for search engine crawlers to navigate. Technical SEO is part of on-site SEO, which means it deals with elements on your own site to raise its ranking.

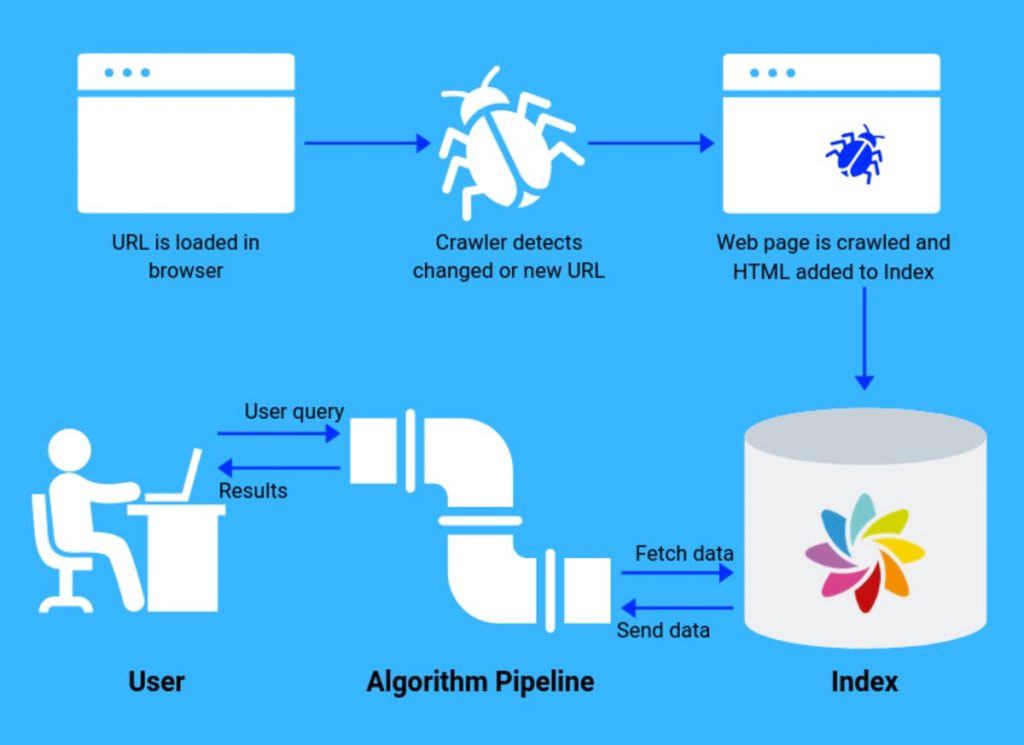

You may be wondering what we mean by a search engine crawler. Let’s use Google as an example.

Before a website can rank on the first page of Google, Googlebot must first be able to visit (aka crawl) the website and store information about the site in its index. You can read more about how Google’s crawling and indexing work here.

Internal links are one example of what technical SEO covers. When there are many broken links within your website, they can cause crawlability issues as well as damage the overall user experience.

If you find yourself lost and need a crash course on what SEO is, we’ve got a guide to help you out.

Why is technical SEO important?

No one likes seeing 404 errors when trying to access a page, not even the search engine crawler.

Among the many other things it handles, technical SEO makes sure this does not happen where it matters.

Ensuring that your website can be navigated smoothly and reducing the number of dead links both play a role in how well the site is received by the search engine crawler.

A clear example of this is how Google’s crawler understands websites. Google has a bot that ‘crawls’ through websites to evaluate web pages.

Let’s assume the bot has found a page on a site, but reaching it takes 8 clicks from the main page. The bot is likely to treat that page as less important and, as a result, it ends up on the 11th page of Google’s search results.

Very few people would search that far casually, which means less traffic to the site regardless of how good the content is. In fact, fewer than 1% of users click on results on the second page alone.

To make matters worse, the site takes several minutes to fully load and some of its links don’t work properly. Some content on the site can’t be viewed on mobile devices, and clicks are being split between 3 duplicate versions of the landing page.

That is not how you want things to work out for your site.
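The “8 clicks from the main page” in the scenario above is what SEO tools usually call click depth. As a minimal sketch, assuming your internal links have already been collected into a dictionary that maps each page to the pages it links to (the `site` structure and the `click_depths` helper below are hypothetical), a breadth-first search from the homepage finds the fewest clicks needed to reach each page:

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str) -> dict[str, int]:
    """Breadth-first search from the homepage: each page's depth is the
    minimum number of clicks needed to reach it through internal links."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit in BFS = shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical site: /about is linked from the homepage, while /p8 sits
# at the end of a chain of pages, 8 clicks away from the homepage.
site = {"/": ["/about", "/p1"]}
for i in range(1, 8):
    site[f"/p{i}"] = [f"/p{i + 1}"]

depths = click_depths(site, "/")
# Assumed threshold for "too deep"; adjust to your own site.
too_deep = sorted(page for page, depth in depths.items() if depth > 3)
print(depths["/p8"])  # 8
print(too_deep)
```

The threshold of 3 clicks is an illustrative assumption, not a limit published by Google; the point is to surface pages buried deep in the link structure so you can link to them from higher up.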

9 things to look out for in technical SEO audits

Many factors affect a site’s ranking and how well it performs. Listed here are some important things to check when doing a technical SEO audit.

1. Robots.txt

This file does exactly what its name suggests. It addresses the bots (robots) that will crawl the site, giving them a set of instructions that define where they can and cannot go.

The importance of this is in making sure the bot can crawl the important parts of the site. A mistake in the code of this file could prevent the bot from accessing the site, thus preventing it from seeing and recognising the keywords and content inside.

You can also deliberately block the crawler from certain pages on your site, for whatever reason you choose. Here’s a quick tool you can use to check if you’ve made a mistake setting up your robots.txt.
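To see how a single mistake in robots.txt can lock the bot out of a whole site, here is a minimal sketch using Python’s standard `urllib.robotparser` module. The two robots.txt files and the `can_crawl` helper are hypothetical examples: the first blocks only an admin area, while the second, with a lone `/`, blocks everything.

```python
from urllib.robotparser import RobotFileParser


def can_crawl(robots_txt: str, url: str, agent: str = "Googlebot") -> bool:
    """Check whether `agent` may fetch `url` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)


# Hypothetical robots.txt files, for illustration only.
intended = "User-agent: *\nDisallow: /admin/\n"
mistake = "User-agent: *\nDisallow: /\n"  # a lone "/" blocks the whole site

print(can_crawl(intended, "https://example.com/blog/"))   # True
print(can_crawl(intended, "https://example.com/admin/"))  # False
print(can_crawl(mistake, "https://example.com/blog/"))    # False
```

Python’s parser follows simpler matching rules than Googlebot does (for example, it applies the first matching rule rather than the most specific one), so treat this as a rough sanity check rather than a substitute for Google’s own testing tools.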

2. XML sitemap

This is another file that helps the crawling bot work through your site. XML sitemaps tell the crawler what is important on the site and the relationships between the pages, and they provide information such as how often each page is updated.

An XML sitemap is most helpful when:

The site is very large

The site contains a lot of media content such as images and videos

The site is not well linked internally

The site is new and does not have a lot of external links pointing to it (aka backlinks)
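For a sense of what a sitemap actually contains, here is a minimal sketch that builds one with Python’s standard `xml.etree.ElementTree`, following the sitemaps.org format. The page URLs and dates are hypothetical, and this sketch only emits the `<loc>` and `<lastmod>` tags; the protocol defines other optional tags as well.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: list[tuple[str, str]]) -> str:
    """Build a minimal XML sitemap from (URL, last-modified date) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc          # absolute page URL
        ET.SubElement(url, "lastmod").text = lastmod  # W3C date, e.g. 2022-04-28
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical pages, for illustration only.
sitemap_xml = build_sitemap([
    ("https://example.com/", "2022-04-28"),
    ("https://example.com/blog/technical-seo/", "2022-04-28"),
])
print(sitemap_xml)
```

The resulting file is typically saved as sitemap.xml at the root of the site, where crawlers and Google Search Console can find it.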

3. Site speed

How quickly your site loads for users is a very important part of technical SEO, and many factors affect it.

If the site is slow, users spend a long time waiting for pages to load, and many will leave for a faster, more accessible site. Google also takes site speed into account when ranking sites: the faster the site, the better the bot will score it.

Here are some things that affect a site’s speed. Be sure to check whether you’ve overdone any of them:

CSS and JavaScript usage: heavy use of scripts will slow down the page.

Server hosting: the speed of your hosting server sets the maximum speed your site can reach.

Plugins: having too many will slow down the page load speed.

Coding: poor coding will slow down or even stop the page from running. Conversely, lean, well-coded pages will run smoothly.

4. Duplicate content

Duplicate content refers to pages that are identical or very similar to an existing page. Each duplicate competes with the main page for both search engine indexing and user visits.

As a result, all the copies compete with each other on link metrics, which lowers the performance of every one of them. Common causes are errors in how URLs are set up and indexed; some examples are listed below.

Exposed index.php file:

When a website’s index.php file can be reached at its own URL, it can cause duplicate content issues. For instance, these two URLs will show identical content despite being separate URLs:

https://example.com/index.php

https://example.com/

URL variations not redirected:

Duplicate content issues can occur when different variations of a URL are not being redirected to the canonical version. For instance, the two URLs below:

https://www.example.com/

http://example.com/

Checking for duplicate content should be done routinely as you update and add pages to your site.
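One way to spot the URL variants described above during a routine check is to map every crawled URL to a single canonical form and look for groups with more than one member. This is a minimal sketch: the list of crawled URLs is hypothetical, and it assumes your preferred canonical form is HTTPS without “www.” and without a trailing index.php, which may not match your own site. On a live site, the fix is still to redirect each variant to the canonical version.

```python
from collections import defaultdict
from urllib.parse import urlsplit, urlunsplit


def canonicalise(url: str) -> str:
    """Map a URL variant to one assumed canonical form:
    https, no "www.", and no trailing index.php."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[len("www."):]
    path = parts.path or "/"
    if path.endswith("/index.php"):
        path = path[: -len("index.php")]  # "/index.php" -> "/"
    return urlunsplit(("https", host, path, parts.query, ""))


def find_duplicates(urls: list[str]) -> dict[str, list[str]]:
    """Group URLs by canonical form, keeping only groups with several variants."""
    groups: dict[str, list[str]] = defaultdict(list)
    for url in urls:
        groups[canonicalise(url)].append(url)
    return {canon: variants for canon, variants in groups.items() if len(variants) > 1}


# Hypothetical crawl results, for illustration only.
crawled = [
    "https://example.com/index.php",
    "https://example.com/",
    "https://www.example.com/",
    "http://example.com/",
    "https://example.com/blog/",
]
print(find_duplicates(crawled))
```

Here the first four URLs collapse into one group, flagging them as duplicates of the same page, while /blog/ is left out because it has no variants.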

5. SSL certificate

SSL certificates allow your site to be served over HTTPS, which Googlebot takes into account when ranking pages. HTTPS is the secure form of HTTP; many web browsers tag plain HTTP sites as ‘not secure’, which may dissuade potential users from visiting.

An SSL certificate also helps protect the site and its visitors from attacks such as spoofing, where an attacker creates a fake version of the site.

Since Google prefers HTTPS sites and ranks them preferentially, using HTTPS is both more secure and better for staying competitive on the search results page.

6. Structured data

Structured data makes your site easier for search engines to understand and can make your listing more attractive to users. An example of this is displaying a product’s price and rating in its search result description.

It does this by using the vocabulary defined on schema.org, which the major search engines support. Google recommends the JSON-LD format, which sits in its own script block and is less likely to break your site.

Adding structured data to your site allows rich snippets such as the product example above to show on the search results page.
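As an illustration of the product price and rating example, here is a minimal sketch that generates a JSON-LD block using the schema.org `Product`, `Offer` and `AggregateRating` types. The `product_jsonld` helper and the product details are hypothetical; the output is the kind of `<script>` block you would place in the page’s HTML.

```python
import json


def product_jsonld(name: str, price: float, currency: str,
                   rating: float, review_count: int) -> str:
    """Return a JSON-LD <script> block describing a schema.org Product."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,  # ISO 4217 code, e.g. "THB"
        },
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": rating,
            "reviewCount": review_count,
        },
    }
    # Escape "</" so text such as "</script>" inside a value cannot close the tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Hypothetical product, for illustration only.
snippet = product_jsonld("Example Desk Lamp", 1290, "THB", 4.6, 87)
print(snippet)
```

Whether a rich snippet actually appears is up to the search engine; valid markup makes a page eligible but does not guarantee it.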

7. Mobile-friendliness

The consumption of content via mobile devices is becoming more and more common, meaning that a good chunk of users will be accessing your site and pages from their phones and tablets.

For this reason, your site and pages must be easy to use and access from these devices. Furthermore, Google has moved to mobile-first indexing, meaning that Googlebot now primarily crawls and indexes the mobile version of your website.

An easy way to check whether or not your site design is mobile friendly is by accessing it from a mobile device or using a mobile emulator.

There are many ways to do this, each with their pros and cons. Here are some examples of what you could do:

Separate mobile site: URLs starting with m. (such as m.example.com) usually indicate a dedicated mobile version of a site.

Web apps: creating a separate app or a progressive web app (PWA) for accessing the site from mobile devices.

Responsive web design: a fluid, flexible design that adapts the website to any screen size.

Often a combination of such methods is used to create fluid access and navigation for mobile users.

8. Register your site with Google

So you’ve developed a very nice site and it just went live, but Google’s crawler is taking its time to get to it. Rather than wait weeks or months for the crawler to find and index the site, you can submit your site to Google to speed up the process.

One way of doing this is simply to register and verify your site in Google Search Console, then submit a sitemap.

Another way is to use Google’s URL Inspection tool, which lets you inspect individual URLs and request indexing for them.

9. Link problems

Links are a very helpful tool for reaching more users and helping them navigate your site. Internal links are essential for site navigation: a site’s landing page will inevitably need a lot of links to make sure all of the site’s pages can be reached.

The problem arises when some of these links don’t work properly, leaving users unable to get to and from certain pages. Links can go wrong in many ways; two of the more common are:

Broken links: the most recognisable are links that return a 404 error because they point to a page that has been deleted or moved elsewhere. You can check for them easily with a dead link checker.

Nofollow internal links: links with the nofollow attribute do not pass PageRank, so if your internal links carry nofollow attributes, many pages on your site can lose their organic rankings.
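To find nofollow internal links on a page, you can scan its HTML for `<a>` tags whose `rel` attribute contains `nofollow` and whose target is on your own domain. This is a minimal sketch using Python’s standard `html.parser`; the `NofollowFinder` class and the sample HTML are hypothetical, and a real audit would run it over every page your crawler fetches.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlsplit


class NofollowFinder(HTMLParser):
    """Collect links on a page that are internal but marked rel="nofollow"."""

    def __init__(self, page_url: str) -> None:
        super().__init__()
        self.page_url = page_url
        self.site_host = urlsplit(page_url).netloc
        self.nofollow_internal: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attributes = dict(attrs)
        href = attributes.get("href")
        rel = (attributes.get("rel") or "").lower().split()
        if href and "nofollow" in rel:
            absolute = urljoin(self.page_url, href)  # resolve relative links
            if urlsplit(absolute).netloc == self.site_host:
                self.nofollow_internal.append(absolute)


# Hypothetical page HTML, for illustration only.
html = """
<a href="/pricing" rel="nofollow">Pricing</a>
<a href="/blog/">Blog</a>
<a href="https://partner.example.org/" rel="nofollow sponsored">Partner</a>
"""
finder = NofollowFinder("https://example.com/")
finder.feed(html)
print(finder.nofollow_internal)  # ['https://example.com/pricing']
```

Only the first link is reported: the blog link has no nofollow attribute, and the partner link points to another domain, where nofollow is often intentional.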

Getting started

Now that you’ve familiarised yourself with what technical SEO is, if you want to dive in deeper, here is an in-depth guide on technical SEO that you may find helpful. We understand it’s difficult to wrap your head around such a complex field, especially if you are trying to apply it to your site by yourself.

Here at Morphosis, we offer expert SEO consultation and services. Feel free to contact us whether you’re having trouble with technical SEO, keyword research, SEO content or UX strategy.
